| Year | 2014 |

| Credits | Ben Simons, technical director, data visualisation |

| 3D Stereo | No |

| Tags | data viz houdini mocap optitrack |

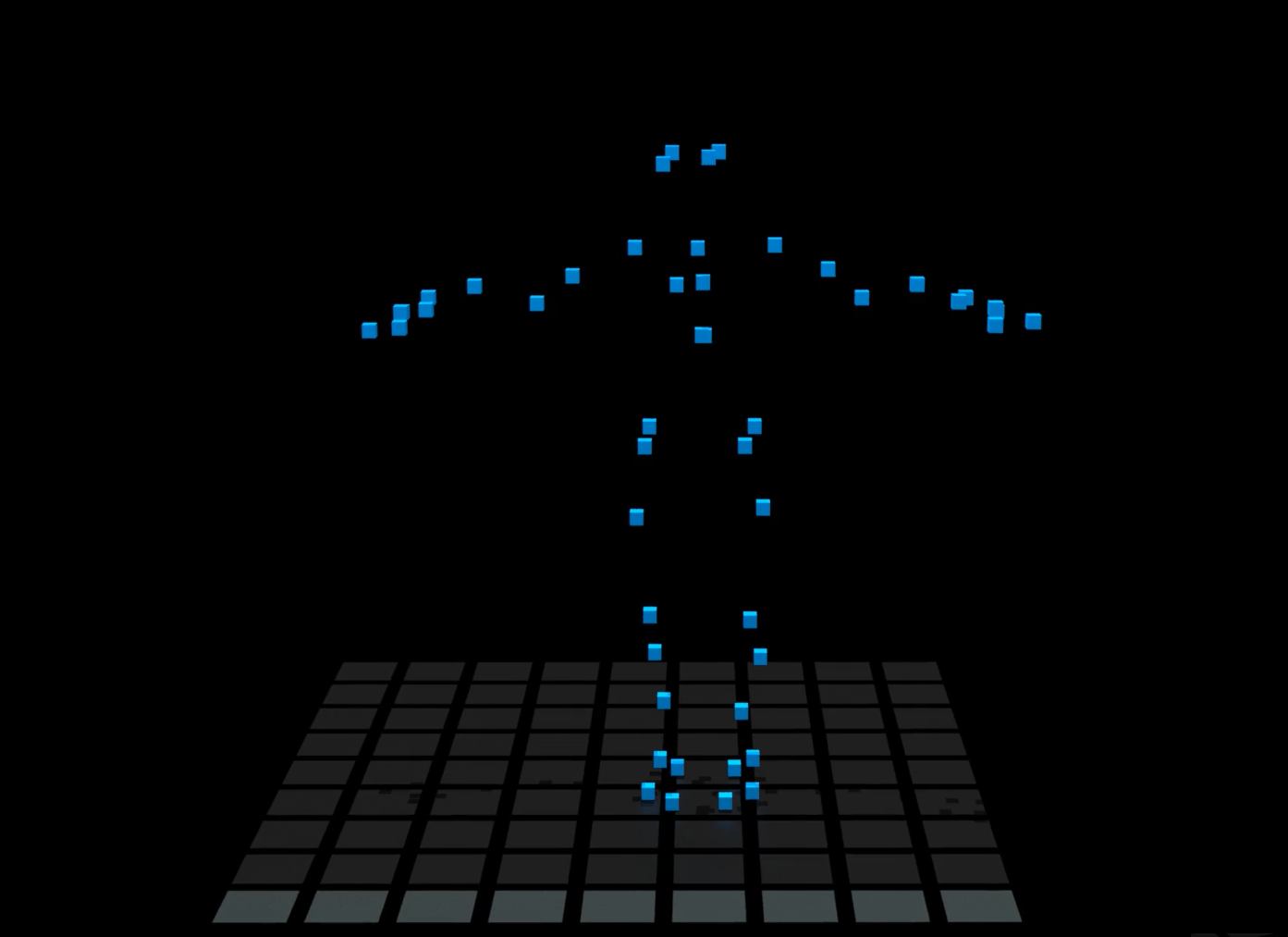

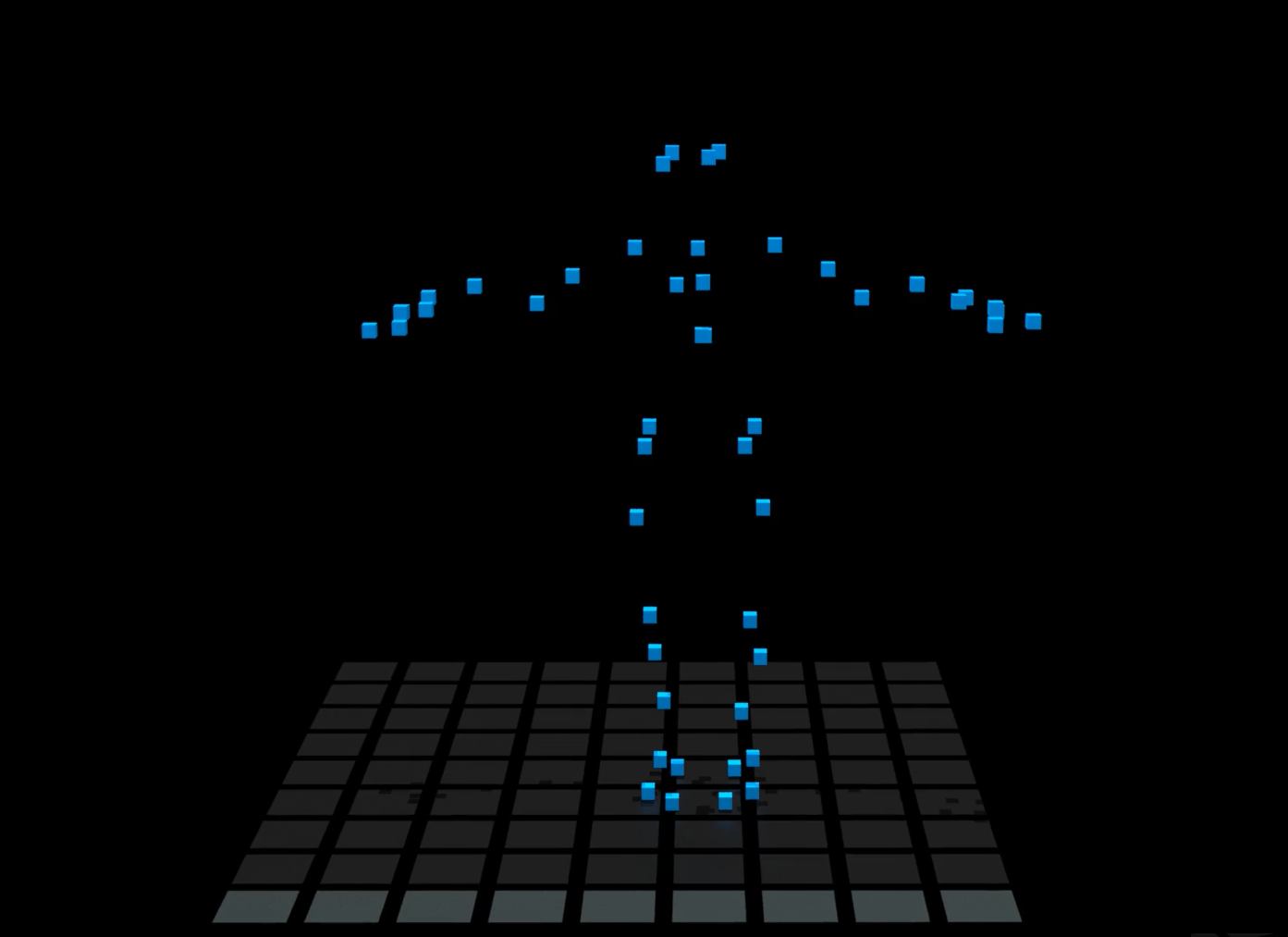

Motion Capture Visualisation

The UTS Data Arena has an Optitrack motion capture system installed in the theatre. Twelve (12) cameras are set at the top rim of the 360-degree cylindrical screen. They track infrared marker balls, attached to a full-body suit (as seen here) or simple marker cards. The cards hold 3 infrared reflective balls arranged into unique combinations, sometimes used as a 3D mouse.

There are 20 different combinations of the 3-ball cards, allowing 20 unique "mice" to be located and tracked in the theatre.

The (x,y,z) location precision is approximately 3 mm. The 3 balls on a card form a triangle, which means we can also infer the orientation of the card: we can tell which way it is pointing.
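As a rough illustration of how a triangle of markers can yield a direction, the sketch below computes the unit normal of the plane through three ball positions. The function name `card_normal` and the sample coordinates are assumptions made for this example and are not taken from the Optitrack software. A normal only gives the direction the card faces; a full orientation would also need an in-plane axis, such as one edge of the triangle.

```python
import math

def card_normal(p0, p1, p2):
    """Return the unit normal of the triangle formed by three marker positions."""
    # Two edge vectors of the triangle, both starting at p0.
    u = [p1[i] - p0[i] for i in range(3)]
    v = [p2[i] - p0[i] for i in range(3)]
    # The cross product of the edges is perpendicular to the card's plane.
    n = [
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    ]
    length = math.sqrt(sum(c * c for c in n))
    if length == 0.0:
        raise ValueError("markers are collinear; orientation is undefined")
    return [c / length for c in n]

# Hypothetical card lying flat in the x-y plane (units: metres).
normal = card_normal((0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.0, 0.1, 0.0))
```

For this flat sample card the normal points along the z axis. Swapping two of the markers reverses its sign, which is one reason each card needs a unique, asymmetric ball arrangement.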

Having 20 tracked cards in the theatre allows us to develop multi-user input. Everyone gets their own 3D mouse.

Tracking a card's orientation allows motion gestures to be used. For example, a 180-degree twist can be interpreted as a button-press. Move the marker card in space to select items on screen, and co-operate with other users.
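One possible way to detect such a twist is to compare the direction the card faces at the start and end of a gesture. The sketch below is only an assumption about how this could be done: the function names, the 20-degree tolerance, and the idea that a twist flips the card's facing direction are all hypothetical choices for illustration.

```python
import math

def angle_between_degrees(a, b):
    """Angle between two 3D direction vectors, in degrees."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    # Clamp to [-1, 1] to guard against floating-point rounding.
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.degrees(math.acos(cosine))

def is_flip_gesture(start_dir, end_dir, tolerance_degrees=20.0):
    """Treat a near-180-degree change in facing direction as a button-press."""
    return angle_between_degrees(start_dir, end_dir) >= 180.0 - tolerance_degrees

# Hypothetical facing directions sampled at the start and end of a gesture.
pressed = is_flip_gesture((0.0, 0.0, 1.0), (0.0, 0.0, -1.0))      # full flip
not_pressed = is_flip_gesture((0.0, 0.0, 1.0), (0.0, 1.0, 0.0))   # quarter turn
```

A tolerance is used because a hand-held card will rarely turn by exactly 180 degrees.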

In this case (see videos) we have one person wearing a tracking suit covered in marker balls. Each of the 12 cameras locates the markers it can see; the camera views are then compared and triangulated to determine each marker's position in (x,y,z).

The (x,y,z) position for each ball is recorded at 50Hz (50 positions per second). Imagine this as a spreadsheet, with 3 columns (X, Y, and Z) for each ball. Sampling at 50Hz means there are 50 spreadsheet rows per second. Say there were 30 tracking marker balls on the suit. Then there would be 30 x 3 = 90 columns of data, and 50 rows per second of recorded data.

For example, the first video (above) records 46 marker balls (blue boxes). That gives 46 x 3 (one set of x, y, z columns per marker) = 138 columns. The video runs for 95 seconds. At 50Hz this means 95sec x 50 rows-per-sec = 4750 rows. Thus the data, seen as a spreadsheet, is 138 columns and 4750 rows. It's a big spreadsheet.
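The same arithmetic can be written as a small helper. This is a minimal sketch; the function name `capture_table_shape` is made up for this example, and the 50Hz default comes from the sampling rate described above.

```python
def capture_table_shape(num_markers, duration_seconds, sample_rate_hz=50):
    """Return (columns, rows) for a capture viewed as a spreadsheet."""
    columns = num_markers * 3                  # one x, y, z triple per marker
    rows = duration_seconds * sample_rate_hz   # one row per sample
    return columns, rows

# The first video: 46 markers recorded for 95 seconds at 50Hz.
columns, rows = capture_table_shape(46, 95)
print(columns, rows)  # prints "138 4750"
```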

The trails are a trade-off between time and space

Imagine only the blue markers were drawn, by themselves (see right), with no orange trails. Searching for tracking errors, noise, or wobbles in the motion would then impose a high cognitive load. You would have to look very carefully, because it is difficult to watch all the markers moving at once.

The orange trails reduce the cognitive load. You could look away, look back, and still have a good idea of what has happened. Notice that smooth motion leaves smooth trails, so tracking errors and motion wobbles are obvious.
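The idea behind a trail can be sketched as a sliding window over each marker's recent positions: a slice of time is drawn as a shape in space. The class below is an illustrative assumption, not a description of how Houdini builds its trails; the name `MarkerTrail` and the window length are hypothetical.

```python
from collections import deque

class MarkerTrail:
    """Keep the most recent positions of one marker as a fixed-length trail."""

    def __init__(self, max_samples):
        # A deque with maxlen drops the oldest sample automatically.
        self.points = deque(maxlen=max_samples)

    def add(self, position):
        self.points.append(tuple(position))

    def segments(self):
        """Return consecutive point pairs, i.e. the line segments to draw."""
        pts = list(self.points)
        return list(zip(pts, pts[1:]))

# At 50Hz, a window of 50 samples holds one second of motion.
trail = MarkerTrail(max_samples=50)
for frame in range(120):
    trail.add((frame * 0.01, 0.0, 1.0))  # hypothetical straight-line motion
```

A longer window shows more history but adds more clutter on screen, which is the trade-off between time and space.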

Optitrack & Houdini

In fact, this data was obtained from an older tracking system that was set up at UTS in 2014. This is not data from the Optitrack system run in the UTS Data Arena (the Optitrack is better).

This visualisation was created in Houdini. The trails are created with a Trail SOP. The setup is modular enough to take new motion capture data files: change the input file to a new motion file and it will create a new movie. In a way, this setup can be thought of as a "motion capture data pipeline". While these are movies, the 3D geometry is also created and can be loaded into the Data Arena runtime display system and viewed in 3D stereoscopic format.

Unreal Engine

More recently we have loaded motion capture data into the Unreal Engine (UE4), and experimented with the Optitrack Tracking Markers being used to interact with 3D environments in UE4.

Laurence Pike in a Mocap Suit, Drumming in the Data Arena

Laurence Pike Mocap Session for Nero

In June 2020 the well-known Australian drummer Laurence Pike recorded a motion capture session in the Data Arena (left). This video is a behind-the-scenes look at the making of the new video for 'Nero'. Notice Laurence is wearing the tracking suit with the (grey) markers.

'Nero' is taken from Laurence Pike's album Prophecy (2020). LP/CD/digital pre-order: https://ffm.to/prophecy.oyd

Interactive video: https://laurencepike.net

Directed by Clemens Habicht http://clemenshabicht.com

Produced by Annabel Stevens, Collider

3D: Hugh Carrick-Allan

Interactive: Andrew Wright

Motion Capture: Thomas Ricciardiello, UTS Data Arena

Thanks to Gabriel Clark and Zoe Sadokierski (UTS)