| Year | 2015 |

| Credits | Prof Simon Ellingsen (Physics & Radio Astronomy, University of Tasmania), Research Lead; Ben Simons, GLIMPSE Data Visualisation (Houdini) |

| Links | |

| 3D Stereo | No |

| Tags | science space spitzer |

Spitzer Space Telescope

To access the demo, download the Data Arena Virtual Machine.

The Research Project

One of the challenges of Big Data research is that it is generally difficult to gain access to large data sets. NASA, however, is one source of free big data, and the Spitzer GLIMPSE Survey data set is an example. The Spitzer Space Telescope trails Earth in an orbit around the Sun and records infrared radiation. The GLIMPSE Survey data set is made available via a Caltech website. The data is split into approximately 360 files; each file contains around 147 columns of infrared data for 100,000 stars. Most researchers start with one file.

While most stars are thought to burn hydrogen, one theory holds that they initially burn methanol, earlier in their formation from dense regions of dust and cloud. By locating stars which could be burning methanol as fuel, astrophysicists in Hobart, Tasmania, aim to identify the evolutionary stages through which stars form, and get a better idea of the developing structure of galaxies.

You may recall seeing blue light when copper is placed into a flame. Light can tell you about chemistry: the blue colour tells you the burning material is copper. The same sort of analysis is occurring in the search for stars that burn methanol, except that in this case the data comes from the infrared spectrum instead of visible light.

In space, dense gas and dust regions focus natural radiation into radio frequency lasers, otherwise known as interstellar masers.

To locate a possible maser in the Spitzer data set, we can perform a calculation based on Simon Ellingsen’s research (UTas Physics), where we compare four bands of infrared radiation. Let’s call them A, B, C, and D.

Essentially the calculation is looking for a difference across the spectrum. We compute A - B and C - D, and then compare those two differences. If you get, say, 2 and 2, then the difference is 0 and we conclude the star is burning hydrogen. However, if we get, say, 1 and 4, then the difference is 3 and we have a candidate methanol maser.

The results of these calculations are shown in the image above. The difference for most stars is close to zero (the blue dots). However, there are some stars which show a difference: the red dots at the top. These are the needles in the haystack. Some twenty or so methanol masers were identified in a data set of 36 million stars.
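The comparison described above can be sketched in a few lines of Python. This is only an illustration: the function names, the threshold of 2.0 and the sample band values are assumptions made for this example, not the selection criteria from Simon Ellingsen’s research.

```python
def colour_difference(a, b, c, d):
    """Return how far apart the two band differences (A - B) and (C - D) are."""
    return abs((a - b) - (c - d))


def is_maser_candidate(a, b, c, d, threshold=2.0):
    """Flag a star whose two band differences disagree by more than `threshold`.

    The threshold is a hypothetical value chosen for this sketch.
    """
    return colour_difference(a, b, c, d) > threshold


# Differences of 2 and 2: nothing unusual.
ordinary = colour_difference(5.0, 3.0, 6.0, 4.0)
# Differences of 1 and 4: a candidate.
unusual = colour_difference(5.0, 4.0, 9.0, 5.0)
print(ordinary, unusual)  # prints: 0.0 3.0
```

In practice the cut-off would be chosen from the distribution of differences across the whole data set rather than fixed in advance.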

The Data

The Spitzer Space Telescope data was downloaded from Caltech. There were hundreds of table files which look like this. They are text files, compressed with gzip, with filenames ending in ".gz". There is a "header" at the top of each file which has a lot of information about where the data came from and what each column represents. The file we are looking at was named GLMIA_l283.tbl.gz

The top of the file has this header

\ Galactic Legacy Infrared Mid-Plane Survey Extraordinaire (GLIMPSE)

\ GLIMPSE Point Source Archive (GLMA)

\ Spitzer Space Telescope (SST) - Infrared Array Camera (IRAC)

\ Channel 1: 3.6 microns (um), Ch2: 4.5 um, Ch3: 5.8 um, Ch4: 8.0 um

\ Date This File Written: 20061201 13:24:55

\ SKY Area: l283-285 deg; b +- 1 deg

\ Number of Archive Sources in this Area: 93828

\ Number of Columns in the Archive: 78

\ See the GLIMPSE Data Products Document for Details about the Archive Fields

The column headers appear next

| designation |tmass_designation|tmass_cntr| l | b | dl | db | ra | dec | dra | ddec |csf| mag_J| dJ_m| mag_H| dH_m|mag_Ks| dKs_m|mag3_6| d3_6m|mag4_5| d4_5m|mag5_8| d5_8m|mag8_0| d8_0m| f_J | df_J | f_H | df_H | f_Ks | df_Ks | f3_6 | df3_6 | f4_5 | df4_5 | f5_8 | df5_8 | f8_0 | df8_0 | rms_f3_6 | rms_f4_5 | rms_f5_8 | rms_f8_0 | sky_3_6 | sky_4_5 | sky_5_8 | sky_8_0 | sn_J| sn_H| sn_Ks|sn_3_6|sn_4_5|sn_5_8|sn_8_0|dens_3_6|dens_4_5|dens_5_8|dens_8_0| m3_6| m4_5| m5_8| m8_0| n3_6| n4_5| n5_8| n8_0| sqf_J | sqf_H | sqf_Ks | sqf_3_6 | sqf_4_5 | sqf_5_8 | sqf_8_0 |mf3_6|mf4_5|mf5_8|mf8_0|

| char | char | int | double | double |double|double| double | double |double|double|int| real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | real | int | int | int | int | int | int | int | int | int | int | int | int | int | int | int | int | int | int | int |

| | | | deg | deg |arcsec|arcsec| deg | deg |arcsec|arcsec| | mag | mag | mag | mag | mag | mag | mag | mag | mag | mag | mag | mag | mag | mag | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | mJy | MJy/sr | MJy/sr | MJy/sr | MJy/sr | | | | | | | |#/sqamin|#/sqamin|#/sqamin|#/sqamin| | | | | | | | | | | | | | | | | | | |

| | null| 0| | | | | | | | | |99.999|99.999|99.999|99.999|99.999|99.999|99.999|99.999|99.999|99.999|99.999|99.999|99.999|99.999|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02|-9.999E+02| -9.99| -9.99| -9.99| -9.99| -9.99| -9.99| -9.99| -9.9| -9.9| -9.9| -9.9| | | | | | | | | -9| -9| -9| -9| -9| -9| -9| -9| -9| -9| -9|

Notice the values "99.999" above. These are "special numbers" which actually mean "unknown" or "missing data". You might expect a missing value to be "0", but zero is a very useful and common value. It would be hard to tell which values were unknown and which were truly zero!

Sometimes "NULL" is used to represent an unknown or invalid value, but not all systems can handle or expect NULLs. So here it's 99.999 or -9.9 or -9.999E+02, depending on the column. When this data is graphed, there will be a large number of points all out at 99.999, so we must remember to cull these.
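Culling the placeholder values before graphing might look something like the sketch below. It assumes each row has already been reduced to a tuple of magnitudes; the row values and function names are made up for illustration.

```python
import math

# Placeholder value used for missing magnitudes in the table shown above.
MISSING_MAG = 99.999


def is_missing(value, sentinel=MISSING_MAG):
    """True when a magnitude equals the placeholder (compared with a tolerance)."""
    return math.isclose(value, sentinel, abs_tol=1e-6)


def cull_missing(rows):
    """Keep only rows in which every magnitude is a real measurement."""
    return [row for row in rows if not any(is_missing(v) for v in row)]


# Hypothetical rows of four magnitudes each; two contain the placeholder.
rows = [
    (11.327, 11.2, 11.1, 11.0),
    (13.171, 99.999, 99.999, 99.999),
    (7.079, 99.999, 7.057, 99.999),
]
clean = cull_missing(rows)
print(len(clean))  # prints: 1
```

The other placeholders (-9.9, -9.999E+02 and so on) could be handled the same way by passing a different `sentinel` for the relevant columns.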

Moving on to the next lines in this same file, we then see the data itself. Only 4 lines are shown here

SSTGLMA G283.8461+00.7628 10253931-5636272 791873126 283.846174 0.762842 0.3 0.3 156.413762 -56.607542 0.3 0.3 0 12.695 0.026 11.896 0.023 11.636 0.024 11.327 0.058 99.999 99.999 99.999 99.999 99.999 99.999 1.332E+01 3.190E-01 1.786E+01 3.784E-01 1.477E+01 3.266E-01 8.274E+00 4.397E-01 -9.999E+02 -9.999E+02 -9.999E+02 -9.999E+02 -9.999E+02 -9.999E+02 4.126E-02 -9.999E+02 -9.999E+02 -9.999E+02 5.830E-01 -9.999E+02 -9.999E+02 -9.999E+02 41.76 47.21 45.24 18.82 -9.99 -9.99 -9.99 25.4 -9.9 -9.9 -9.9 2 0 0 0 2 0 0 0 0 0 0 536887360 -9 -9 -9 12 -9 -9 -9

SSTGLMA G283.8463+00.7993 10254778-5634361 791923152 283.846372 0.799317 0.3 0.3 156.449081 -56.576683 0.3 0.3 0 13.473 0.048 13.412 0.067 13.174 0.052 13.171 0.125 99.999 99.999 99.999 99.999 99.999 99.999 6.506E+00 2.876E-01 4.421E+00 2.728E-01 3.584E+00 1.716E-01 1.514E+00 1.745E-01 -9.999E+02 -9.999E+02 -9.999E+02 -9.999E+02 -9.999E+02 -9.999E+02 0.000E+00 -9.999E+02 -9.999E+02 -9.999E+02 5.700E-01 -9.999E+02 -9.999E+02 -9.999E+02 22.62 16.21 20.88 8.68 -9.99 -9.99 -9.99 27.1 -9.9 -9.9 -9.9 1 0 0 0 1 0 1 0 8192 8192 8192 16448 -9 -9 -9 12 -9 -9 -9

SSTGLMA G283.8474+00.7247 10253097-5638260 791872817 283.847419 0.724742 0.3 0.3 156.379048 -56.640534 0.3 0.3 0 9.587 0.024 8.099 0.047 7.518 0.024 7.079 0.053 99.999 99.999 7.057 0.028 99.999 99.999 2.332E+02 5.154E+00 5.898E+02 2.553E+01 6.557E+02 1.449E+01 4.138E+02 2.032E+01 -9.999E+02 -9.999E+02 1.729E+02 4.408E+00 -9.999E+02 -9.999E+02 5.551E-14 -9.999E+02 1.935E+00 -9.999E+02 5.130E-01 -9.999E+02 3.229E+00 -9.999E+02 45.24 23.10 45.24 20.36 -9.99 39.22 -9.99 26.4 -9.9 8.9 -9.9 1 0 3 0 2 0 3 0 0 0 0 0 -9 536903808 -9 12 -9 12 -9

SSTGLMA G283.8476+00.7107 10252781-5639092 791872700 283.847669 0.710728 0.3 0.3 156.365943 -56.652556 0.3 0.3 0 16.235 0.103 15.411 0.120 15.634 0.233 15.023 0.157 99.999 99.999 13.043 0.352 99.999 99.999 5.111E-01 4.848E-02 7.013E-01 7.751E-02 3.718E-01 7.979E-02 2.750E-01 3.985E-02 -9.999E+02 -9.999E+02 6.972E-01 2.259E-01 -9.999E+02 -9.999E+02 2.434E-02 -9.999E+02 1.084E-16 -9.999E+02 6.320E-01 -9.999E+02 3.466E+00 -9.999E+02 10.54 9.05 4.66 6.90 -9.99 3.09 -9.99 28.2 -9.9 8.9 -9.9 2 0 1 0 2 0 2 0 0 0 0 320 -9 0 -9 12 -9 12 -9

:

:

To load this data into Houdini, we stripped the header and column titles from each file, selected the 7 columns we wanted from the 79 columns in each file, then pasted them all together into one "super" file. The resulting file simply looked like this. Only the first part (the "head") of the text file is shown below: 10 lines. The complete file has 36.2 million lines. It's in the simple Houdini ".chan" file format, which is a plain-text file with a consistent number of space-separated values per line, in this case 7 values per line. Data in this format can be read into Houdini with a File CHOP. There are many other ways to load data into Houdini as well.
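The stripping and column selection could be done along the following lines. This is a sketch, not the script that was actually used: the page does not say which 7 columns were kept, so the indices in `WANTED` are placeholders, and the function names are invented. Note that in the sample rows above, splitting on whitespace gives 79 fields for the 78 declared columns, because the designation ("SSTGLMA G283.8461+00.7628") contains a space.

```python
import gzip
import io

# Hypothetical 0-based indices into the whitespace-split fields of a data row.
# The page does not say which 7 columns were kept, so these are placeholders.
WANTED = (12, 13, 15, 17, 19, 21, 23)


def extract_rows(lines, wanted=WANTED):
    """Yield the chosen fields from each data row, skipping header lines.

    Header comments start with a backslash and column descriptions with '|'.
    """
    for line in lines:
        stripped = line.strip()
        if not stripped or stripped[0] in "\\|":
            continue
        fields = stripped.split()
        yield [fields[i] for i in wanted]


def convert(tbl_gz_path, chan_path, wanted=WANTED):
    """Append the chosen columns of one gzipped table to a .chan text file."""
    with gzip.open(tbl_gz_path, "rt") as src, open(chan_path, "a") as dst:
        for row in extract_rows(src, wanted):
            dst.write(" ".join(row) + "\n")


# Small in-memory demonstration with a made-up three-column table.
sample = io.StringIO("\\ comment\n| a | b | c |\n1 2 3\n4 5 6\n")
print(list(extract_rows(sample, wanted=(0, 2))))  # [['1', '3'], ['4', '6']]
```

Calling `convert` once per downloaded file, with the same output path each time, would build up the single concatenated file.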

Extracted data file: allSouthSpitzer.chan

/da/proj/spitzer/data $ head allSouthSpitzer.chan

0 0.036 0.035 0.032 0.072 99.999 99.999

0 0.044 0.025 0.036 0.074 99.999 99.999

0 0.041 0.039 0.042 0.089 99.999 99.999

0 0.043 0.038 0.032 0.110 99.999 0.262

0 0.033 0.042 0.038 0.071 99.999 99.999

0 0.046 0.021 0.041 0.064 99.999 99.999

0 0.043 0.021 0.032 0.079 99.999 99.999

0 0.086 0.051 0.060 0.079 99.999 0.347

0 0.189 0.317 0.303 0.131 99.999 99.999

0 0.028 0.027 0.039 0.065 99.999 99.999

:

:

:

Why is this important?

There are a few things to learn from this visualisation. Besides the primary task, looking for masers, it demonstrates data precision and illustrates the opportunity to make a visual discovery of an unknown unknown.

This visualisation from the Data Arena was shown at a Big Data workshop held at the University of Tasmania. It uses a computer graphics technique called a shader to compute the distance from the camera to the data and so determine how much data to show. It performs a neighbourhood distance calculation to test the space around each point. If a point is the first point in a region, or the only point, it is drawn; any other nearby points which would appear ‘on top’ of it are ignored until there is room to draw them. You can see this ‘sampling’ of the data set in the video for this project. As the camera flies in closer towards the data, there’s more room and so more data is drawn. This is a good way to draw large data sets.
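The idea of drawing only the first point in each region can be approximated with a simple grid, as in the sketch below. This is not the shader’s code: it is a CPU-side stand-in that assumes the "region" is a cubic cell whose size scales with camera distance, and the `spacing` factor and sample points are invented for the example.

```python
def visible_points(points, camera_distance, spacing=0.01):
    """Keep the first point found in each grid cell; skip later neighbours.

    The cell size grows with camera distance, so fewer points are drawn
    from far away and more appear as the camera moves in.
    """
    cell = camera_distance * spacing
    occupied = set()
    kept = []
    for x, y, z in points:
        key = (int(x // cell), int(y // cell), int(z // cell))
        if key not in occupied:
            occupied.add(key)
            kept.append((x, y, z))
    return kept


# Four hypothetical points, two of them very close together.
points = [(0.0, 0.0, 0.0), (0.02, 0.0, 0.0), (0.5, 0.5, 0.5), (0.9, 0.1, 0.3)]
far = visible_points(points, camera_distance=100.0)
near = visible_points(points, camera_distance=1.0)
print(len(far), len(near))  # prints: 1 4
```

From far away all four points share one cell and a single dot is drawn; close up each point has its own cell and all four appear.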

One interesting and unexpected discovery from the visualisation is that it is possible to see the precision in the data. While the level of precision is already known, it’s an interesting thing to see. If you stop the video in the early section where we orbit the blue stars you will see black gaps appear and disappear, both vertical and diagonal. These gaps indicate we have flown in so close that there is no more data. We have hit the limit of the data’s precision: with a fixed number of digits following the decimal point, there are no finer values. Even though the number 0.12345 has more precision than 0.12, there will always be a limit to the precision. Past that limit you begin to see gaps, e.g. between 0.12345 and 0.12346. To fill in the gaps you need to add an extra digit at the end, giving more precision, like 0.123455555.

On the second day of the workshop, participants had the opportunity to ‘pull apart’ the Houdini network which was used to create the fly-through video of the visualisation. The participants searched for other types of stars by changing the Spitzer data columns and the calculation. The present colour scheme simply represents distance from zero, from blue to red. Workshop participants modified the shader to colour the dataset differently, based on other data.
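The size of those gaps can be estimated directly from the numbers. The sketch below finds the smallest spacing between distinct values in a column; the function name is an invention for this example, and the sample values are the second column of the extracted file shown earlier.

```python
from decimal import Decimal


def smallest_gap(values):
    """Return the smallest non-zero spacing between the distinct values given.

    Values are passed as text and parsed with Decimal so that the spacing is
    computed on the digits as written, without binary rounding noise.
    """
    distinct = sorted({Decimal(v) for v in values})
    gaps = [b - a for a, b in zip(distinct, distinct[1:])]
    return min(gaps) if gaps else None


# Values taken from the second column of the extracted file shown earlier.
column = ["0.036", "0.044", "0.041", "0.043", "0.033", "0.046", "0.043"]
print(smallest_gap(column))  # prints: 0.001
```

With three digits after the decimal point, no two distinct values can be closer than 0.001, which is the kind of grid that shows up as regular gaps when the camera gets close enough.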

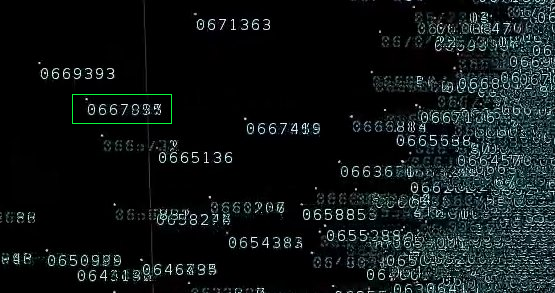

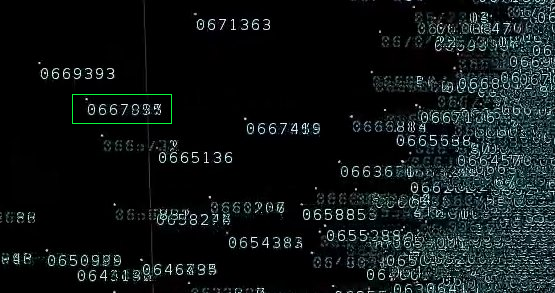

One participant changed the shader to show the star’s ID. Instead of a coloured dot, we now saw the index into the Spitzer data set for that star. This would allow us to see the IDs of the stars which were red: the candidate masers.

When the movie was played back we saw long strings of digits. The numbers had 6 or 7 digits in them, like 0669393, because there really are 36 million data points in the data set.

However, in doing this we noticed something else. If you play this second movie you will see it. Some of the digits in the IDs are messed up: some IDs entirely, some not at all, and some are half-ok, with only a few digits scrambled.

Puzzling. See the ID which looks like 0667$%%?

If you look carefully, you can see numbers written on top of one another. There are duplicate values! Imagine the number “1234” written on top of “1235”. The “123” would look ok, but the overlapped “4” and “5” make an odd-looking digit. That’s what we are seeing. There are overlaps somewhere.

Not long after seeing this we checked the dataset for duplicates. It's not hard to do. We just weren't prompted to look for them.

In Linux, we piped the large file into “sort”, then removed the duplicates with “uniq” and then used “wc” to see how many stars remained:

$ wc allSouthSpitzer.chan

36242329 253696303 1451773147 allSouthSpitzer.chan

$ sort allSouthSpitzer.chan | uniq | wc

9461733 66232131 369697853

The first of these two commands shows the line, word and byte counts for the data set: it has 36242329 lines. The second command shows that once the file is sorted and duplicates removed, only 9461733 lines remain. This is quite alarming. It shows about 74% of the lines in the file “allSouthSpitzer.chan” are duplicates! There are only about 9.5 million unique stars.

It’s a very simple check that only takes 30 seconds, but no one was prompted to check for duplicates because no one suspected they existed. This was the unknown unknown, and very useful information to have if you are using this data set!
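The arithmetic behind that percentage, and an in-memory equivalent of the shell pipeline, can be sketched as follows. The function names are made up for this example; the two line counts are the ones reported by the shell commands above.

```python
def count_unique(lines):
    """Return (total, unique) line counts, like `wc -l` and `sort | uniq | wc -l`."""
    total = 0
    seen = set()
    for line in lines:
        total += 1
        seen.add(line)
    return total, len(seen)


def duplicate_fraction(total, unique):
    """Fraction of lines that repeat an earlier line."""
    return (total - unique) / total


# The line counts reported by the two shell commands above.
fraction = duplicate_fraction(36242329, 9461733)
print(f"{fraction:.1%}")  # prints: 73.9%
```

For a file of this size, holding every line in a Python set takes a lot of memory, which is one reason the `sort | uniq` pipeline is a convenient way to do the check.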

Duplicates occurred in this case because the large data set was made by simply concatenating all 360 Spitzer GLIMPSE Survey files served from the Caltech website. They were all downloaded, then joined into one big file. The problem was that each file of 100,000 stars overlapped with its neighbouring file. They’re like tiles which overlap.

If a star was in the middle of a file, it would not be in the overlap, and when drawn on the screen it would appear ‘clean’. But if the star appeared near a tile edge, it most likely appeared in the next file too, and when all stars are numbered from file to file, the neighbouring star ID would be a number pretty close to the first one. This is why the star IDs were partially similar.

Imagine searching through 36 million entries, when only 9 million are unique. You would not notice this if you worked on one file at a time. It was only when we combined all 360 files together into 1 file that we noticed the duplicates.
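One way to avoid the overlap when joining tiles is to remember each star’s designation and skip rows that have already been seen. The sketch below is only an illustration of that idea, not how the data set was actually rebuilt: it assumes the designation (the first two whitespace-separated fields, as in the sample rows earlier) identifies a star uniquely across files, and the tile contents are invented.

```python
def merge_tiles(tiles):
    """Concatenate rows from several tiles, keeping each designation once.

    Each tile is an iterable of data rows (header lines already removed).
    The designation is taken to be the first two whitespace-separated fields,
    for example "SSTGLMA G283.8461+00.7628"; that is an assumption here.
    """
    seen = set()
    merged = []
    for tile in tiles:
        for row in tile:
            fields = row.split()
            designation = " ".join(fields[:2])
            if designation not in seen:
                seen.add(designation)
                merged.append(row)
    return merged


# Two hypothetical tiles sharing one star along their common edge.
tile_a = ["SSTGLMA G283.1000+00.1000 12.1", "SSTGLMA G284.9900+00.2000 13.4"]
tile_b = ["SSTGLMA G284.9900+00.2000 13.4", "SSTGLMA G285.5000-00.3000 9.8"]
print(len(merge_tiles([tile_a, tile_b])))  # prints: 3
```

Deduplicating on the designation rather than on the whole line keeps the check valid even if the same star’s values differ slightly between tiles.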

The new Caltech GLIMPSE website has maps which indicate these tiles and their overlaps.

The moral of this story is that sometimes it’s worth putting your data on the screen, even when you work with it every day and think you know it thoroughly. It’s amazing how quickly you can see new things you weren’t expecting.