Videos and Movies in the Data Arena

Quick Notes

- The Data Arena can display nearly any kind of regular video file

- Videos can be resized and positioned around the screen, like a desktop computer

- Video does not need to be 360º wrap-around. It can be placed anywhere on screen.

- Videos can be placed alongside other media, or replicated for effect.

- It's a regular "computer desktop" - just very high resolution.

- The usual web browsers (Firefox, Chrome, etc.) all display on this desktop.

- We have the usual video player applications for movies (the FFmpeg-based mpv, mplayer, vlc, and bino)

- The primary limit is screen height - 3840 pixels ("px") in the vertical direction

- the screen is almost 4m high (pixels are approximately 1mm square)

- The full 360º display is very wide (aspect ratio is: 74:9)

- Standard aspect ratios (e.g. 16:9) only fill a portion of the screen before height is cropped

- For a best experience, create a custom-formatted video for our display

- For regular monoscopic (no 3D glasses) videos, our new 2024 resolution is 31550 x 3840px

- If this size is too large to render, you might want to try a half (15775 x 1920px) or one-third resolution (10516 x 1280px)

- For stereoscopic videos, your video resolution would be the same width but with double the height, for top-bottom stereo
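The resolution figures in the notes above can be reproduced with a little arithmetic. The following is a minimal sketch only: the function names `render_size` and `fitted_width` are hypothetical helpers made up for illustration, and the one-third size is rounded down to whole pixels.

```python
# Render-size arithmetic for the 2024 display figures quoted in the notes.
FULL_WIDTH, FULL_HEIGHT = 31550, 3840  # monoscopic resolution from the notes


def render_size(divisor=1, stereo=False):
    """Return (width, height) for a full (1), half (2) or one-third (3) render."""
    width = FULL_WIDTH // divisor
    height = FULL_HEIGHT // divisor
    if stereo:
        height *= 2  # top-bottom stereo stacks the two eye images vertically
    return width, height


def fitted_width(aspect_w, aspect_h, screen_height=FULL_HEIGHT):
    """Width in pixels of a video scaled so its height fills the screen."""
    return round(screen_height * aspect_w / aspect_h)


print(render_size())                # (31550, 3840)
print(render_size(2))               # (15775, 1920)
print(render_size(3))               # (10516, 1280)
print(render_size(1, stereo=True))  # (31550, 7680)
print(fitted_width(16, 9))          # 6827
```

A 16:9 video scaled to the full screen height is therefore about 6827 px wide, a little over one fifth of the 31550 px panorama.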

To transfer videos to the Data Arena, see this F.A.Q → "How do I transfer my data?"

Note: the information below was written for our previous display resolution (pre-2024). Our new resolution is much larger, and our aspect ratio has changed slightly (our display is now taller than before). Videos created at our previous resolution still look fantastic and are slightly letterboxed (thin bars at the top and bottom).

The info below is still relevant, just keep in mind the new resolution above.

The Data Arena displays high-resolution 360-degree 3D-stereoscopic movie content with 16-channel surround sound. The screen resolution is “30K” Stereo (30K each eye). Standard HD TV is 2K.

This immersive environment is an ideal venue to conduct a client review or launch event for VR movie projects. Please contact us to enquire further or to arrange a booking!

How to prepare your video

There are so many movie formats. The Data Arena is able to display most of them, with varying amounts of setup. It really depends on the sort of movie. If it’s a standard HD movie (e.g. TV resolution) then it’s pretty straightforward: standard movies just play. However, the Data Arena can display much more, including 3D Stereoscopic 360-degree Panoramic Movies, and movies designed for Virtual Reality (VR) Head Mounted Displays (HMD).

HD "2K" Format

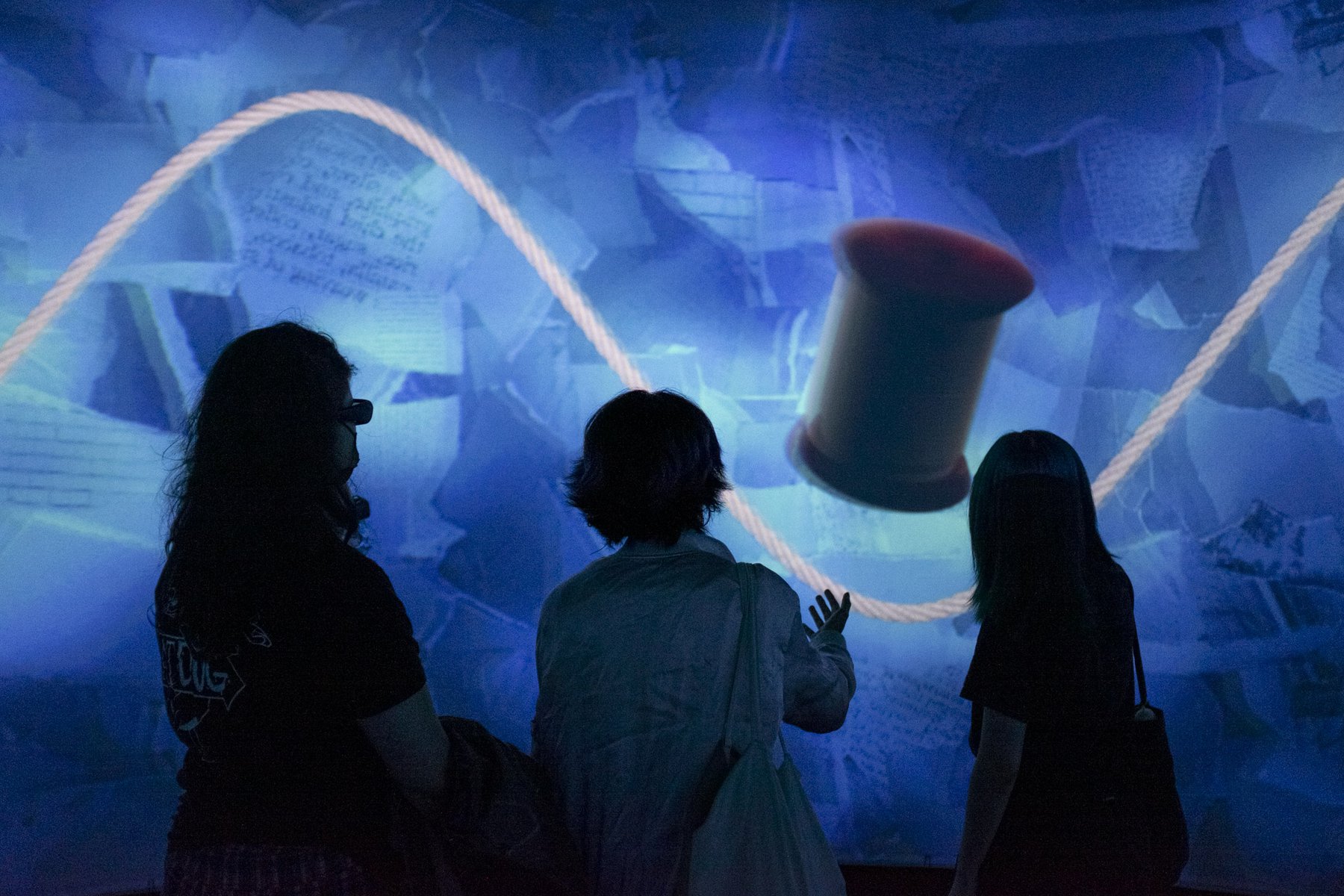

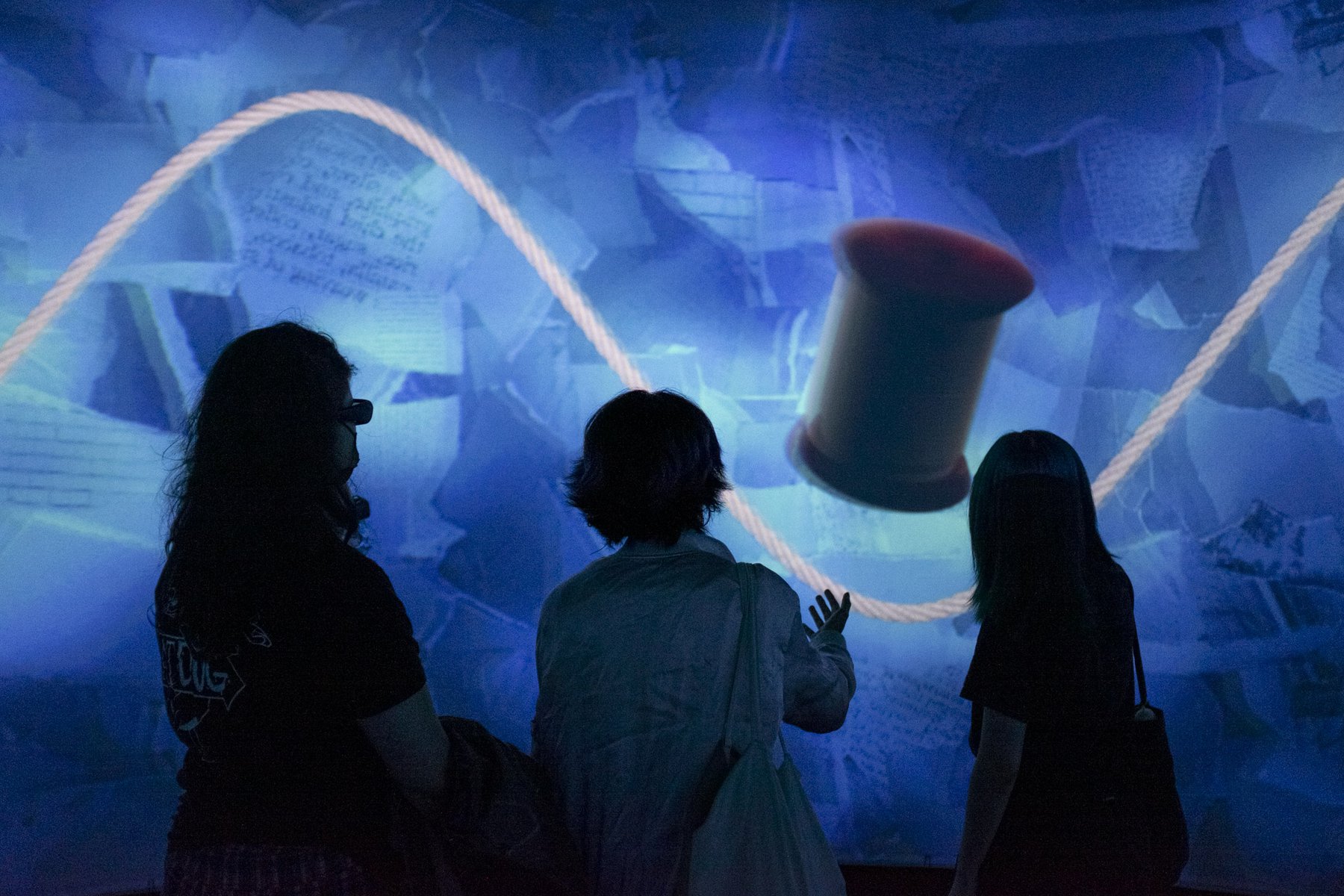

The most common HD formats easily play in the Data Arena. One mode we like to use plays 6 copies of the same movie, replicated around the 360-degree screen. With the audience standing in the middle of the theatre, each person typically has a clear view to one of the copies. The left/right sides of the movies are blended together (approx 100 pixels each side). If you’ve been in the Data Arena you might have seen some movies played in this format. For example, the bacteria visualisation movie is shown in this way.

Standard HD Format Movies include: 1920×1080 or 1920×1200 pixels

Movie Codecs: mpeg2, mpeg4, h264, quicktime, webm

The Data Arena uses FFMPEG as the base library to handle multimedia data. This is great because the FFMPEG library decodes most movie formats.

Movie players which use ffmpeg include: mplayer, vlc, and bino-3D. These movie players are used in the Data Arena.

Odd Shaped Movies

The movie does not need to be HD format 1920×1080. The Data Arena can play almost any resolution, and scale the movie to fit on screen. The main consideration when scaling is the DA screen height: 1200 pixels. So, a movie which was (say) 400×300 pixels in its “normal” size could be scaled to full-screen 1600×1200. This scaling can happen automatically at run-time. No preparation is required.
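The scaling described above is a uniform scale to the screen height. As a rough sketch (the helper name `scale_to_height` is hypothetical, and the default height of 1200 is the pre-2024 figure used in this section):

```python
def scale_to_height(width, height, screen_height=1200):
    """Scale a movie uniformly so its height matches the screen height."""
    scale = screen_height / height
    return round(width * scale), screen_height


print(scale_to_height(400, 300))        # (1600, 1200)
print(scale_to_height(400, 300, 3840))  # (5120, 3840) at the 2024 screen height
```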

However, if there’s some alternate scaling desired, then sometimes it’s better to bring the movie in ahead-of-time, so that it can be adjusted to suit. For example, we might crop the movie, or set a letterbox format.

Some Apple Quicktime MOV files (MOOV Files) can be a problem

Movie files can be in various “container” formats. These containers hold audio/video data encoded in various “codec” formats. Some QuickTime movies use a proprietary file “container” called MOV (Apple's QuickTime file format). The display of movies in this format can sometimes present a problem.

When we find Quicktimes using MOV, we use FFMPEG to convert it to an open format container, like MP4. The conversion is fairly fast (usually comparable to regular playback speed). If you are looking to transcode a movie, handbrake is a good choice (it uses FFMPEG). These days MOOV files are considered ancient. Time to transcode!
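As an illustration of such a conversion, the sketch below builds an ffmpeg command line that re-encodes a MOV file into an MP4 container. The codec choices (`libx264` video, `aac` audio) and the file names are assumptions for the example, not necessarily the settings used in the Data Arena, and `libx264` is only available if your ffmpeg build includes it.

```python
import shlex


def mov_to_mp4_command(src, dst):
    """Build an ffmpeg argument list that transcodes src (MOV) to dst (MP4)."""
    return [
        "ffmpeg",
        "-i", src,          # input file
        "-c:v", "libx264",  # assumed video codec (H.264)
        "-c:a", "aac",      # assumed audio codec
        dst,
    ]


cmd = mov_to_mp4_command("clip.mov", "clip.mp4")
print(shlex.join(cmd))
# ffmpeg -i clip.mov -c:v libx264 -c:a aac clip.mp4
```

To actually run the conversion, pass the list to `subprocess.run(cmd, check=True)` on a machine with ffmpeg installed.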

ffmpeg reports “moov atom not found”

The Moov Atom problem is something a little different, and can be another cause for difficulty – it’s usually due to a corrupted/truncated movie file (a bad transcoding). Essentially the Moov Atom contains all the information about the video; details such as the duration, tracks, frame-rate, codecs, encoding, etc. Keep in mind, for a live recording from a video camera, some of this info is not known until the end. For instance, the duration. In some cases, such as video streams, the duration is unknown. The larger videos become, the more often there’s missing data or corrupted files.

Check a Video

There are a number of ways to check a video is ok, and to gain information about it. ffprobe (from ffmpeg) is a multimedia stream analyser. There’s an enormous amount of information about ffprobe in the ffprobe documentation.

Mediainfo will provide quite detailed information about a video. It will show the codecs used, the duration and speed, colour space, audio channel information, and resolution (width & height). In Linux, “mediainfo” is a command you can run on the command-line. In the DAVM, open a shell (Konsole) and type: mediainfo.
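ffprobe can also print its report as JSON, which is handy for scripted checks. The sketch below builds such a command and pulls a few fields out of the result. The helper names and the `sample` string are made up for illustration; the sample is hand-written in the shape of ffprobe's JSON output, not a real report.

```python
import json


def ffprobe_command(path):
    """Build an ffprobe argument list that reports format and streams as JSON."""
    return ["ffprobe", "-v", "error", "-show_format", "-show_streams",
            "-of", "json", path]


def summarise(probe_json):
    """Extract codec, resolution and duration of the first video stream."""
    info = json.loads(probe_json)
    video = next(s for s in info["streams"] if s.get("codec_type") == "video")
    return {
        "codec": video["codec_name"],
        "width": video["width"],
        "height": video["height"],
        "duration_s": float(info["format"]["duration"]),
    }


# Hand-written sample in the shape of ffprobe's JSON output (not a real report).
sample = ('{"streams": [{"codec_type": "video", "codec_name": "h264", '
          '"width": 1920, "height": 1080}], "format": {"duration": "12.5"}}')
print(summarise(sample))
```

If ffprobe cannot read the file at all (for example a truncated file with no moov atom), it exits with an error instead of printing a report, which is itself a useful check.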

To fix a video file, sometimes it’s a simple matter of rewriting it with ffmpeg. For example, try something like this Linux ffmpeg command (change .mkv to .mp4 or .mpg as appropriate):

ffmpeg -err_detect ignore_err -i badvideo.mkv -c copy video_fixed.mkv

Handbrake

Essentially, we are all using the same Open Source library libav to process audio/video data. However, libav is too low-level for most people, so we use ffmpeg at the Linux command line. On other systems (Windows, Mac, and Linux too), handbrake provides a nice graphical interface to ffmpeg.

4K Video

The Data Arena is a 10K display. There are a number of ways a 4K video can be played. It can be “doubled” so the video appears twice, on the east and west sides of the theatre. It can also be stretched around the theatre (which naturally requires some letterboxing). It can be placed in the middle of the screen, with black applied elsewhere. With some setup time, even parts of a movie can be segmented and replayed on various regions in the theatre (this is a bit more advanced, and requires a special Equalizer .eqc file created for bino). Regular 4K video playback is easy, and we have various set-configurations ready to go.

VR HMD Movies

Movies designed to play in a Virtual Reality Head Mounted Display (VR HMD) can be played in the Data Arena. The great aspect here is that instead of the movie being played to one person (the one wearing the HMD), an audience of 20 or 30 people can all see the movie together, on the Data Arena’s cylindrical screen.

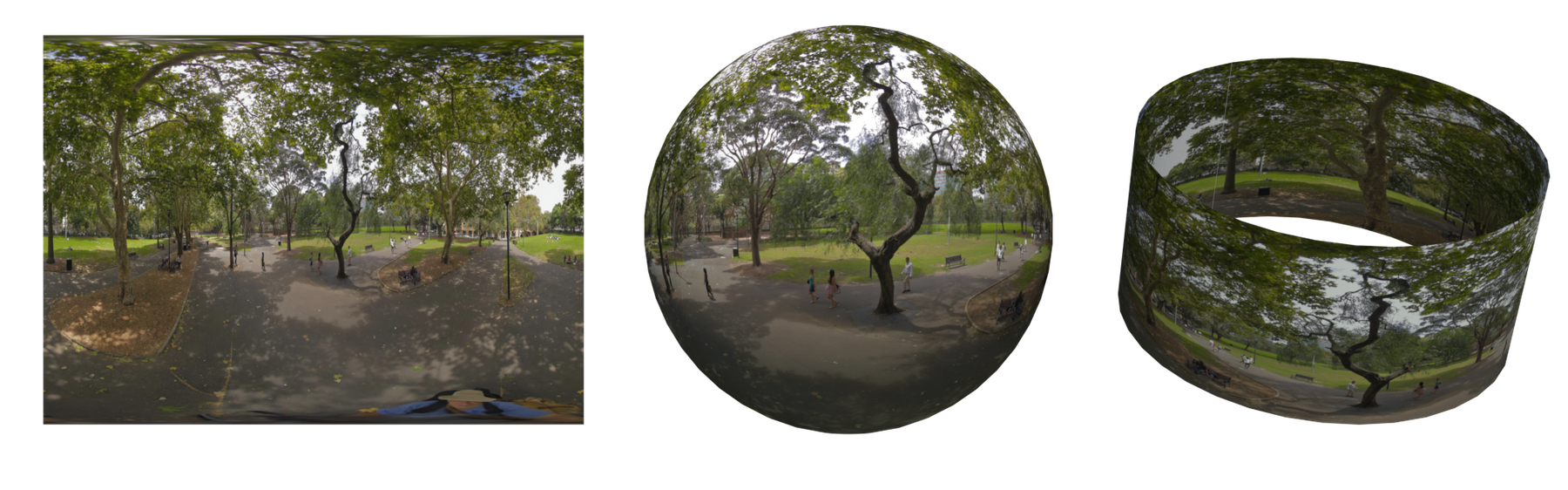

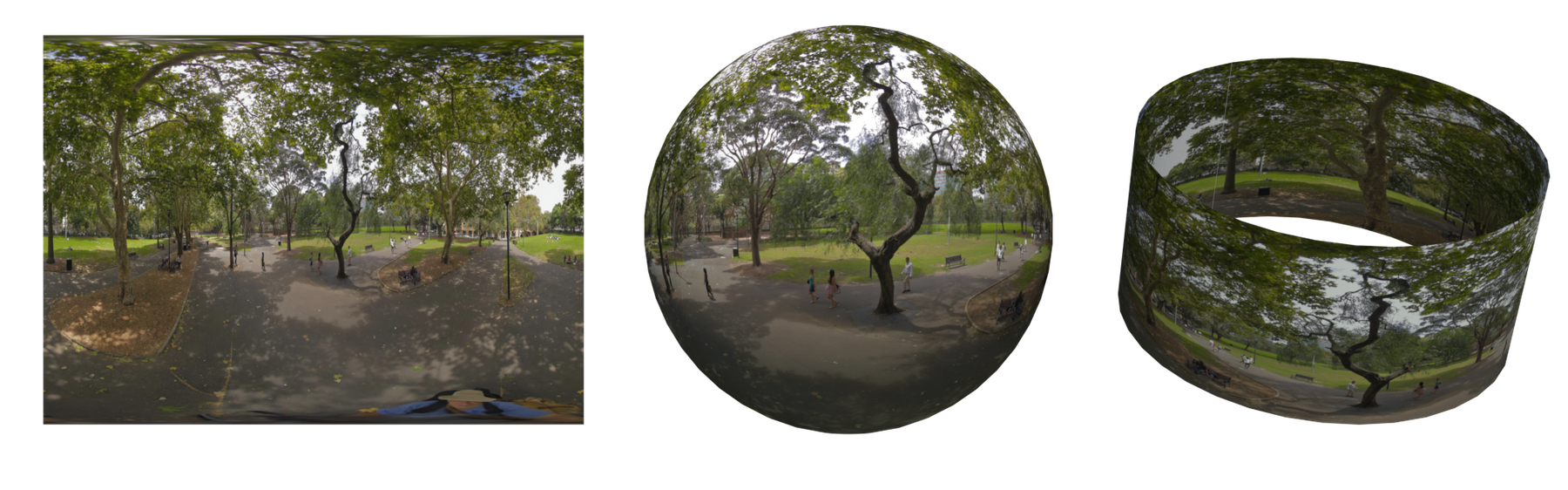

VR movies are typically mapped to the inside of a virtual sphere. The viewer’s Point-of-View is from the centre of this sphere. As the viewer looks around, up and down, parts of the sphere are mapped to the Head Mounted Display.

In the Data Arena, this sphere of video is transformed to a cylindrical mapping. Then, a slice from this cylinder is displayed on the Data Arena’s cylindrical screen. This allows a group to all see the video together. Instead of needing to look around with a pair of (selfish) TVs attached to your head, the full-360 is there on the screen.

In addition, the vertical position of this cylindrical slice can be moved up/down live and interactively, via the Space Navigator.
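To make the sphere-to-cylinder idea concrete, here is a minimal geometric sketch. It assumes the VR movie is stored as an equirectangular image (longitude across, latitude down) and that the cylinder has unit radius around the viewer. The function name and conventions are assumptions for illustration; this is not the Data Arena's actual transform.

```python
import math


def cylinder_to_equirect(theta, z, src_width, src_height):
    """Map a point on a unit-radius cylinder to equirectangular pixel coordinates.

    theta: angle around the cylinder in radians, in [-pi, pi)
    z:     height on the cylinder in units of its radius (0 is eye level)
    """
    latitude = math.atan(z)  # elevation angle of the point as seen from the centre
    u = (theta + math.pi) / (2 * math.pi) * src_width
    v = (math.pi / 2 - latitude) / math.pi * src_height
    return u, v


# The point straight ahead at eye level maps to the centre of the source image.
print(cylinder_to_equirect(0.0, 0.0, 4096, 2048))  # (2048.0, 1024.0)
```

Moving the displayed slice up or down corresponds to sampling a different range of `z` values.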

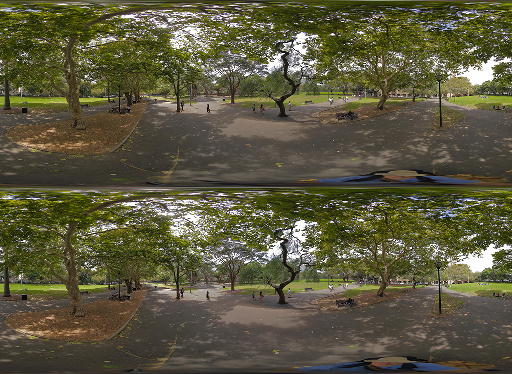

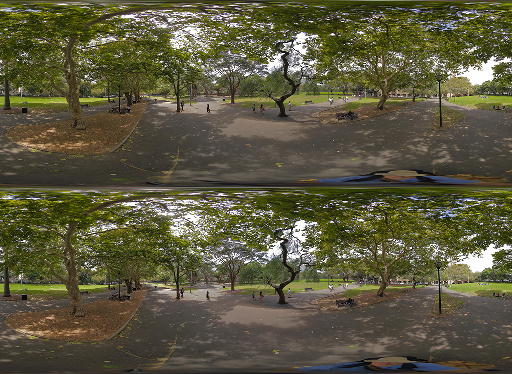

(Above) Spherical Image (left), mapped to a sphere, and a cylinder

A Stereo Video format is the same as this, but with 2 images; one for each eye. The images are expected to be in Top/Bottom format (see Panoramic Movies, below). Videos where the 2 images are in left/right stereo format can also be handled, but Top/Bottom is preferred.

(Above) Top/Bottom format (preferred)

(Above) Left/Right format

Panoramic Movies

Regular Monoscopic

The Data Arena’s resolution for a panoramic monoscopic movie is 10576 x 1200 pixels. Your movies should be rendered at this output resolution in order to fill the entire 360 degree screen. There is no need to render overlaps or distort the image. A monoscopic movie is 1200 pixels high. Most regular movies are monoscopic (eg TV).

(Above) Panoramic Movie

3D Stereo Panoramic

Stereoscopic movies have separate images for the left eye and for the right eye. There are many different stereo movie formats in the field. The Data Arena prefers to display movies which are in the simple “top/bottom” format. This is where the image for the left eye is in the top half of the image frame, and the right eye image is in the bottom half.

Instead of being 1200 pixels high, a top/bottom stereo format movie is 2400 pixels high. It’s simply two panoramic movies on top of each other. 1200 + 1200 = 2400 pixels. The image is basically bigger, vertically. The stereo movie resolution is: 10576 x 2400 pixels.
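The top/bottom layout can be expressed as two crop rectangles. The sketch below (with a hypothetical helper name, `eye_rects`) returns `(x, y, width, height)` for each eye, using the pre-2024 stereo resolution quoted above.

```python
def eye_rects(width, height):
    """Return (left_eye, right_eye) crop rectangles as (x, y, w, h).

    Assumes top/bottom stereo: left eye in the top half, right eye in the bottom.
    """
    half = height // 2
    left_eye = (0, 0, width, half)
    right_eye = (0, half, width, half)
    return left_eye, right_eye


left, right = eye_rects(10576, 2400)
print(left)   # (0, 0, 10576, 1200)
print(right)  # (0, 1200, 10576, 1200)
```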

(Above) Panoramic Stereoscopic Movie (top/bottom format)

There are a number of benefits to top/bottom stereo format. Firstly, the display timing is much simpler because both left and right images are available at the same time. The system can’t miss. Subsequent synchronisation with the 3D Active Shutter Glasses (worn by users) is therefore reliable.

Sliced Movies

When the movie duration is short, or only a low resolution is required, the movie content may be output directly to a movie file format such as .MKV or .MP4 and imported into the Data Arena for immediate playback.

There is a pipeline to help process high resolution panoramic movies. It's best to let us do the 6-Slice on the cluster.

The Data Arena is actually 6 video projectors connected to six separate computers. If you think about it, this means that computer number 3 (say) only plays 1/6th of a panoramic movie. It ‘throws away’ 5/6th of every frame, because it only wants to display its portion of the 360-degree movie. When panoramic stereo movies become very large, due to fine detail or low compression, we sometimes decide to slice a movie into 6 pieces. That way, each computer only plays the bit it needs. This reduces the amount of disk I/O by 6-times!

If the movie needs to be sliced vertically, then having the stereo format in top/bottom format means we only need to do one cut. Both the left and the right sections stay aligned. It’s much simpler to do, than if the movie was in the more-typical left/right format. Top/Bottom format guarantees each slice maintains the correct left & right stereo disparity.
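As a rough sketch of the slicing geometry, the code below divides a panoramic frame into six column ranges and produces one full-height crop per slice, so the top (left eye) and bottom (right eye) halves are cut together. The function names are hypothetical, and the overlap between neighbouring slices used for projector blending is deliberately left out.

```python
def slice_columns(width, slices=6):
    """Return (x_start, x_end) column ranges, with x_end exclusive."""
    edges = [i * width // slices for i in range(slices + 1)]
    return list(zip(edges[:-1], edges[1:]))


def slice_rects(width, height, slices=6):
    """One full-height crop (x, y, w, h) per slice; both eyes stay together."""
    return [(x0, 0, x1 - x0, height) for x0, x1 in slice_columns(width, slices)]


print(slice_columns(10576))
# [(0, 1762), (1762, 3525), (3525, 5288), (5288, 7050), (7050, 8813), (8813, 10576)]
print(slice_rects(10576, 2400)[2])  # (3525, 0, 1763, 2400)
```

Because 10576 is not divisible by 6, the slices here are 1762 or 1763 pixels wide; a real pipeline would pick its own boundaries and overlaps.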

(Above) Optional 6-Slice Format for better I/O

Longer movies rendered at the maximum display resolution (with high detail, low compression) will usually require additional post-processing before they are ready to play. This is the 6-slice processing, performed offline/overnight in Nuke. In this scenario, the movie should be output as a sequence of individual frames in the .EXR or .PNG file format with a separate audio track supplied as a .AIFF or .WAV file.

For example, if the movie runs at 25 fps, then there would be 25 image files per second of playback. Image filenames are typically named something like: myMovieName.00001.png, myMovieName.00002.png, …
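The naming scheme can be sketched as follows. The helper `frame_names` is hypothetical, and the five-digit, one-based numbering simply follows the example filenames above.

```python
def frame_names(base, fps, seconds, ext="png"):
    """List frame filenames for a clip, numbered from 00001."""
    count = round(fps * seconds)
    return [f"{base}.{i:05d}.{ext}" for i in range(1, count + 1)]


names = frame_names("myMovieName", 25, 2)
print(len(names))  # 50
print(names[0])    # myMovieName.00001.png
print(names[-1])   # myMovieName.00050.png
```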

Please note the slicing process is really only for large panoramic movies. It’s not always necessary. A movie with a bitrate less than 250Mb/s will play fine, unsliced.
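A quick way to estimate where a movie sits relative to that figure is to divide its file size by its duration. This sketch assumes "Mb/s" means megabits (10^6 bits) per second and uses the file's average bitrate. The function names are hypothetical.

```python
def bitrate_mbps(size_bytes, duration_s):
    """Average bitrate in megabits per second (1 Mb = 1,000,000 bits)."""
    return size_bytes * 8 / duration_s / 1_000_000


def plays_unsliced(size_bytes, duration_s, limit_mbps=250):
    """True if the average bitrate is under the 250 Mb/s guideline."""
    return bitrate_mbps(size_bytes, duration_s) < limit_mbps


# A 3 GB file lasting two minutes averages 200 Mb/s.
print(bitrate_mbps(3_000_000_000, 120))    # 200.0
print(plays_unsliced(3_000_000_000, 120))  # True
print(plays_unsliced(6_000_000_000, 120))  # False
```

Peak bitrate can be well above the average, so treat this as a first estimate only.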

If you have access to a compositing software package such as NUKE, it may be possible for you to perform the necessary post-processing steps yourself. An example NUKE gizmo is included in the DAVM under the directory /local/examples/nuke. It demonstrates how to convert the movie content into a series of six overlapping slices suitable for playback on the Data Arena projector array. This gizmo will convert a sequence of full resolution stereo frames into six individual sequences, which may then be independently encoded into movie files.

Please ensure that you allow sufficient lead-time for us to be able to prepare your movie for optimal playback. We also recommend that you arrange one or more display tests prior to the main event so that you have the opportunity to evaluate and apply any colour or grade adjustments that may prove necessary due to the specific display characteristics of the Data Arena projector array.

Update: It's better to [bring](https://dataarena.net/dive-in/faq#167) an unsliced movie in to the DA to be sliced on the DA Cluster. That way it runs in parallel (faster). Another good reason is that each video slice is then encoded with the same ffmpeg codec/libraries which decode the video when it plays.